Introducing Orbiter

A generative ambient music system

Building great software in 2026 still demands engineering discipline, but it also feels more like an art form than it ever has for me. Worrying less about punishing implementation difficulty leaves room to wander, imagine, and experiment with things that felt borderline impossible before. Orbiter is my best attempt at that so far. I hope you enjoy playing with it.

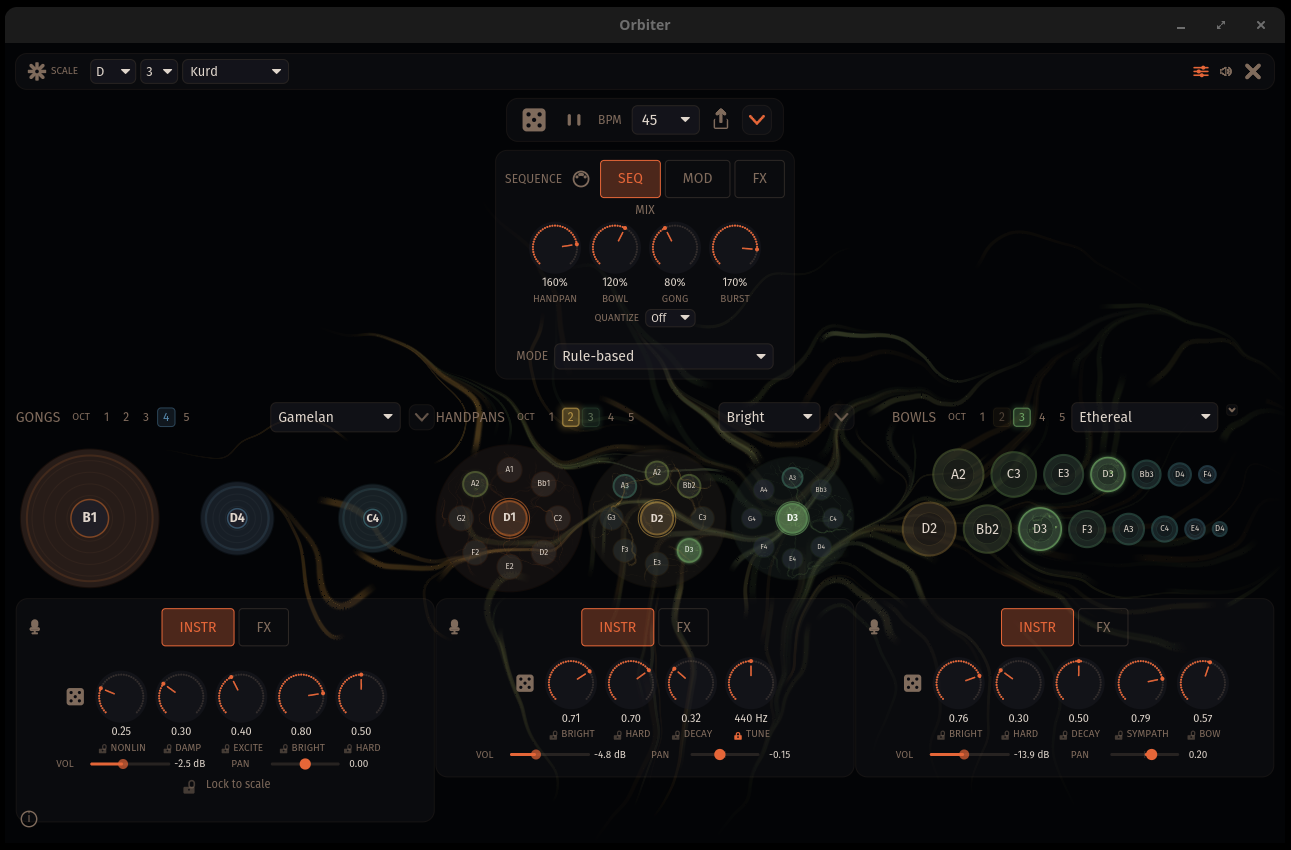

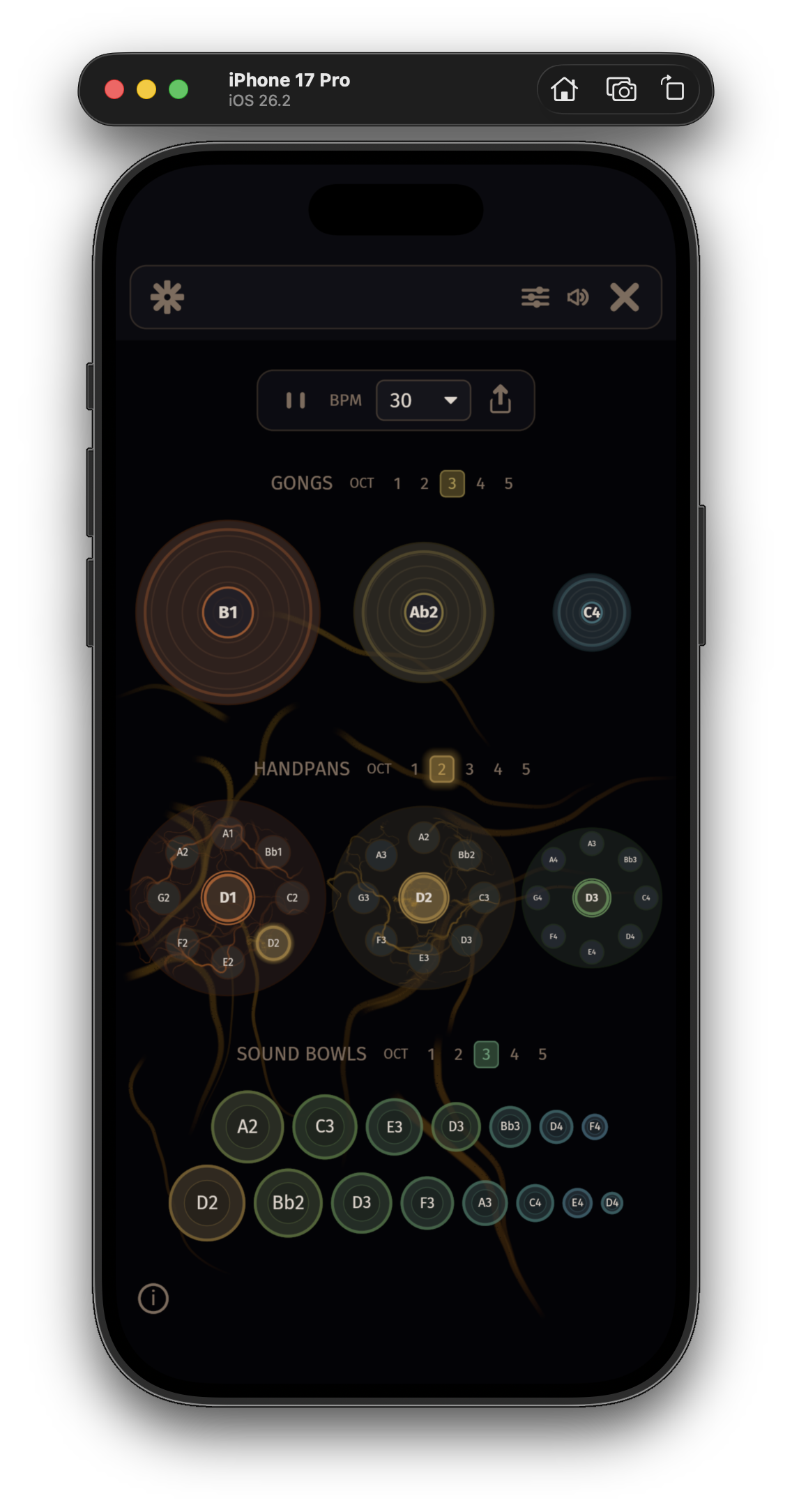

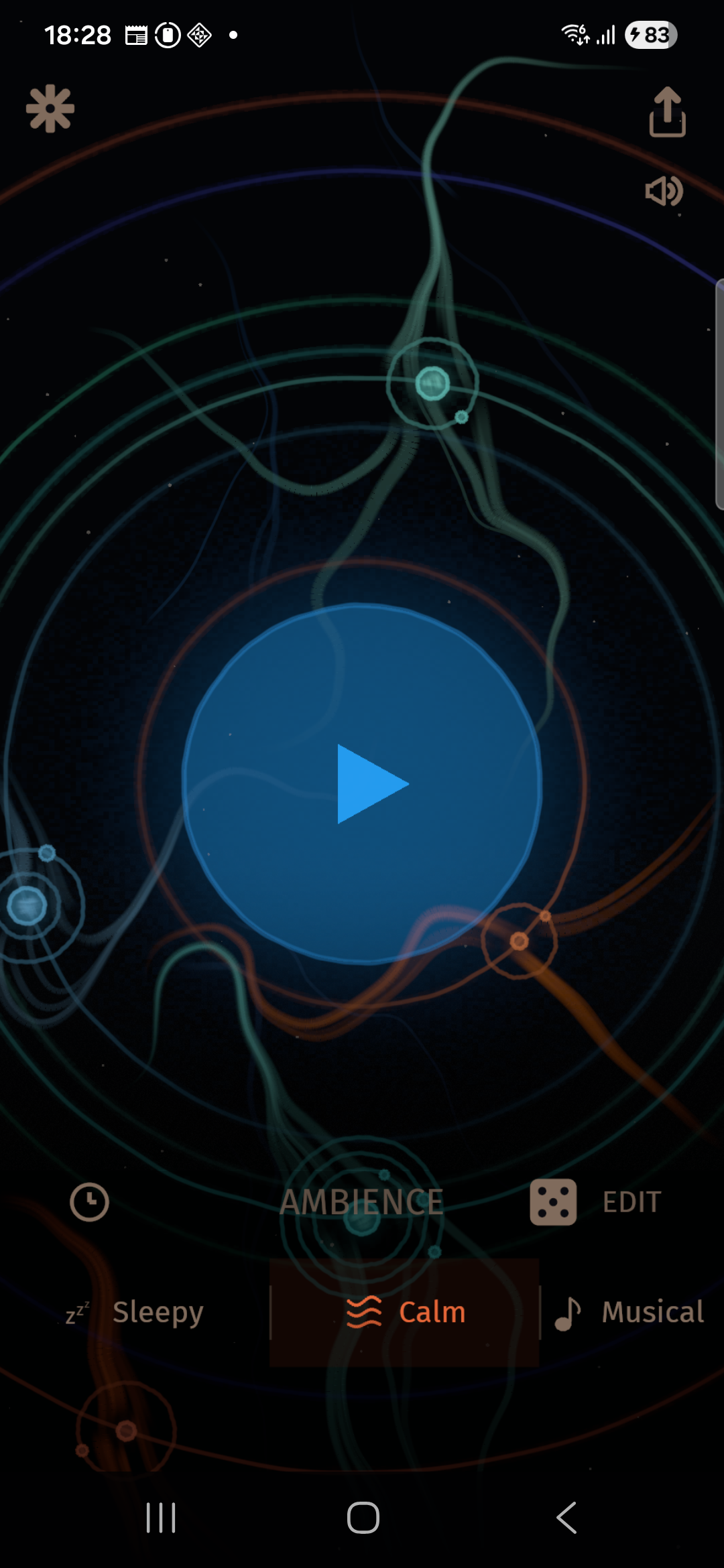

Orbiter is a generative ambient music system. Its built-in sequencer composes meditative music in real time, or you compose together with it — driving physically modelled instruments. At this point there are three instruments available in the suite: handpans, gongs and singing bowls, but I am building some more.

This app is my best take on a few specific physical modelling synthesis methods as someone who is still learning. Turns out that as an indie developer I can now build and deploy an ambitious cross-platform suite of software, powered by something as hard as real-time audio digital signal processing (DSP) and on-device machine learning (ML) inference, across web, desktop and mobile platforms, with native technologies in use everywhere.

Here it is, running live in the browser (click Play to set it off):

The thing is, Orbiter also runs on macOS, Windows, Linux, iOS, and Android — with VST3, AU3, and CLAP plugins for DAW integration. A follow-up post will cover how I approach going cross-platform to this degree (in a few words, Rust and wgpu).

Orbiter started out as a series of related experiments, focused on the following three challenges:

- Realising a software instrument that feels, sounds and looks immediate, beautiful, and strange in the right kind of way, on all the supported platforms.

- Showcasing physical modelling realtime DSP and on-device ML inference across web, desktop, and mobile.

- Shipping a single codebase as a web app, and as a standalone desktop and mobile app, and a set of DAW plugins simultaneously — without relying on Electron, Tauri, or other webview-based approaches.

Physical Modelling

The flavour of immediate and strange synthesis that I was drawn to is physical modelling, in particular modal synthesis. Perhaps because I play the cello (and lately the String Armonica mkII) and have enjoyed tinkering with electroacoustics (MIDI controlled solenoids and the Microphonic Playground for the win), it feels interesting to build an instrument that takes inspiration from something that really exists in physical space, yet is impossible or unreal in some way (for example, its physical properties can be modulated or automated over time).

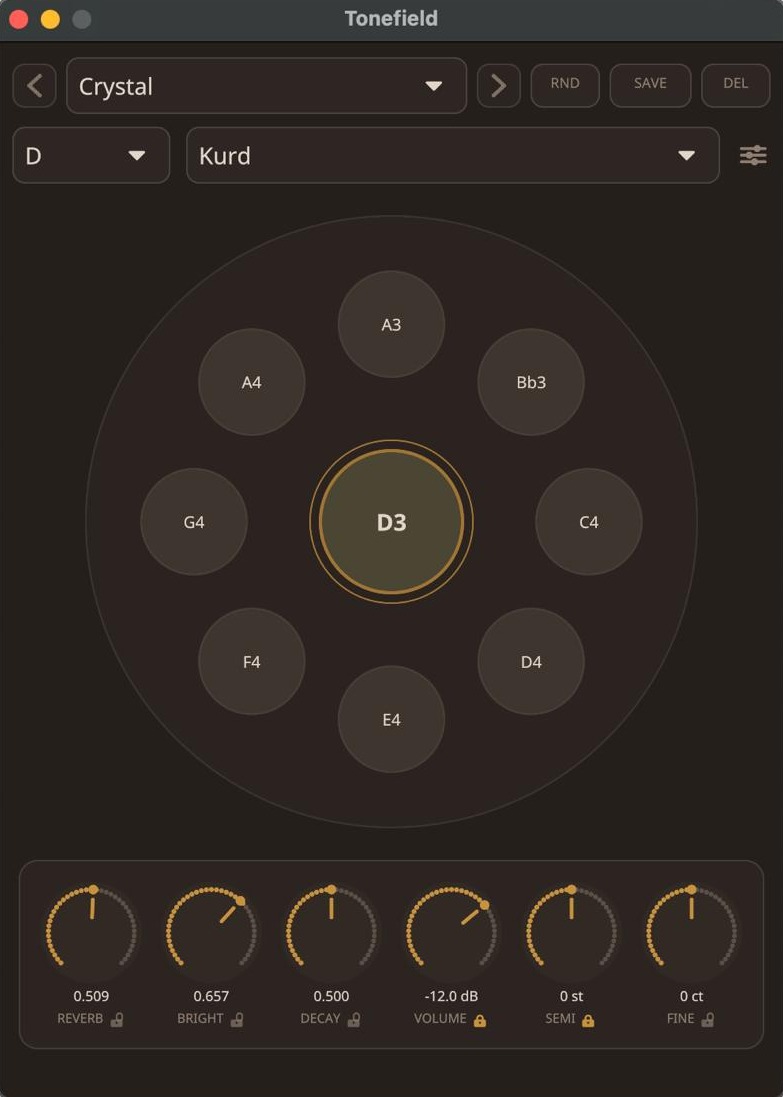

Handpan seemed like an interesting starting point for exploring this: (a) I love the sound, (b) a model that captures a bit of its character is relatively simple and low in computational complexity, and (c) there are lovely, oddball scales involved (Hijaz, Kurd, Pygmy, to name a few).

I built the first prototype of these ideas as a DAW plugin, using the Rust-based nih-plug (with iced), and tested it with Ableton Live and Bitwig. The standalone app target that nih-plug provides was a handy way to prototype the feel of the app outside a DAW.

An early demo — handpan in D Hijaz.

On hearing it I was hooked and wanted to expand to additional, more complex physical models: a gong, and a bowed singing bowl, based on research literature. Each instrument reflects a different, relevant physically inspired principle:

- Handpan — uses modal synthesis with biquad resonators modelling individual vibrational modes. I found Alon (2015) "Analysis and Synthesis of the Handpan Sound" particularly useful — it provided mode frequency ratios and decay times, the detuned mode pair structure that gives handpans their shimmering sustain, Helmholtz cavity coupling for warmth, and an excitation model. There is still a lot more to explore in that thesis alone.

- Gong — also modal, but the modes interact with each other. The challenge is capturing the pitch-shifting swells and rising shimmer as energy cascades from lower to higher modes after a strike. This is modelled using the Föppl–von Kármán equations for large-deflection plate vibration, where the coupling between modes grows with amplitude (hence "nonlinear" — hit harder and the modes interact more). The approach follows Bilbao et al. (2023) "Real-Time Gong Synthesis" (DAFx-23).

- Singing bowl — combines modal resonance with an elasto-plastic friction model (Dupont et al., 2002) for bowing. The challenge is the sustained, slowly wavering tone produced by stick-slip friction when a rim is rubbed — the puja mallet alternates between sticking and slipping, driving inharmonic modes into self-sustained oscillation.
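To make the modal idea concrete, here is a minimal sketch of a struck voice built from a bank of two-pole resonators, one per vibrational mode. It is not Orbiter's code: the names `Mode` and `ModalVoice` are hypothetical, and the frequency ratios, decay times and amplitudes are placeholder values chosen for illustration, not the measurements from Alon (2015). The slightly detuned twin of the fundamental is there only to show how a mode pair produces slow beating.

```rust
use std::f64::consts::TAU;

/// One vibrational mode: a two-pole resonator (the feedback half of a biquad).
struct Mode {
    a1: f64,   // 2 * r * cos(w)
    a2: f64,   // r^2
    gain: f64, // input scaling so the impulse response is amp * r^n * sin((n + 1) * w)
    y1: f64,
    y2: f64,
}

impl Mode {
    /// `t60_s` is the time the mode takes to decay by 60 dB.
    fn new(freq_hz: f64, t60_s: f64, amp: f64, sample_rate: f64) -> Self {
        let w = TAU * freq_hz / sample_rate;
        // Pole radius: r^(t60 * sample_rate) = 10^-3, i.e. -60 dB after t60 seconds.
        let r = 10f64.powf(-3.0 / (t60_s * sample_rate));
        Mode { a1: 2.0 * r * w.cos(), a2: r * r, gain: amp * w.sin(), y1: 0.0, y2: 0.0 }
    }

    fn tick(&mut self, x: f64) -> f64 {
        let y = self.gain * x + self.a1 * self.y1 - self.a2 * self.y2;
        self.y2 = self.y1;
        self.y1 = y;
        y
    }
}

/// A struck note: the sum of several independent modes.
struct ModalVoice {
    modes: Vec<Mode>,
}

impl ModalVoice {
    fn new(f0_hz: f64, sample_rate: f64) -> Self {
        // (frequency ratio, T60 in seconds, amplitude): placeholders, NOT data from the thesis.
        let partials = [
            (1.0, 2.5, 1.0),
            (1.003, 2.5, 0.6), // detuned twin of the fundamental, gives slow beating
            (2.0, 1.8, 0.5),
            (3.0, 1.2, 0.3),
        ];
        let modes = partials
            .iter()
            .map(|&(ratio, t60, amp)| Mode::new(f0_hz * ratio, t60, amp, sample_rate))
            .collect();
        ModalVoice { modes }
    }

    /// Excite every mode with a unit impulse and render `num_samples` of output.
    fn strike(&mut self, num_samples: usize) -> Vec<f64> {
        (0..num_samples)
            .map(|n| {
                let x = if n == 0 { 1.0 } else { 0.0 };
                self.modes.iter_mut().map(|m| m.tick(x)).sum::<f64>()
            })
            .collect()
    }
}
```

Calling `ModalVoice::new(220.0, 48_000.0).strike(48_000)` renders one second of a decaying tone. A real instrument model would replace the unit impulse with a shaped excitation and add coupling between modes, which is where the gong and singing bowl get their character.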

Once I got the app running both standalone on the web and as a DAW plugin, ideas began to flow. How about…

- Sharing a sequence state by URL: especially since I can ship this thing for real on the web, what if I could send the sequence I'm hearing to someone else in that same exact state and we could just sit there and enjoy it together? Or explore the sound together, collaboratively, changing the scale, or instrument and FX parameter knobs together in real time?

- What if each parameter had slightly chaotic, out-of-sync low-frequency modulation, so the sound slowly breathes and evolves on its own? Who doesn't love a good slow-evolving, complex modulation where you discover new nuances as you concentrate (omgomgomg, one day I want to reach Triple Sloth Eurorack module grade dream state with the Orbiter modulation system too).

- Patch randomisation: what if each random sequence produced by the app (identified by its random seed) also had its own unique set of instrument, scale type and root note parameters? Also, give a fast way to randomise individual instrument parameters.

- Since the rule-based sequencer is so much fun, wouldn't it be nice to drive a DAW like Ableton or Bitwig with MIDI output from the app, with MIDI clock or Ableton Link based sync?

- A playback timer, so you can relax and fall asleep to it.

- … and so on.
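The out-of-sync modulation idea from the list above can be sketched in a few lines. This is an assumption about one simple way to do it, not a description of Orbiter's modulation system: each parameter gets its own hypothetical `DriftLfo`, which sums two sines whose rates differ by the golden ratio so the combined shape never exactly repeats. Strictly speaking that is quasi-periodic, not chaotic; a chaotic source would need a nonlinear system instead.

```rust
use std::f64::consts::TAU;

const GOLDEN_RATIO: f64 = 1.618_033_988_749_895;

/// Slow modulator: two sines with an irrational rate ratio, output in [-1, 1].
struct DriftLfo {
    rate_hz: f64,
    phase_a: f64, // normalised phase in [0, 1)
    phase_b: f64,
}

impl DriftLfo {
    /// Give each parameter a different `rate_hz` and `start_phase` so they drift apart.
    fn new(rate_hz: f64, start_phase: f64) -> Self {
        DriftLfo { rate_hz, phase_a: start_phase.fract(), phase_b: 0.0 }
    }

    /// Advance by `dt_s` seconds and return the current modulation value.
    fn tick(&mut self, dt_s: f64) -> f64 {
        self.phase_a = (self.phase_a + self.rate_hz * dt_s).fract();
        self.phase_b = (self.phase_b + self.rate_hz * GOLDEN_RATIO * dt_s).fract();
        0.5 * ((TAU * self.phase_a).sin() + (TAU * self.phase_b).sin())
    }
}

/// Apply a modulation value to a normalised parameter, keeping it in [0, 1].
fn modulated(base: f64, depth: f64, lfo_value: f64) -> f64 {
    (base + depth * lfo_value).clamp(0.0, 1.0)
}
```

With rates well below 1 Hz (say 0.05 Hz for one knob and 0.037 Hz for another), the parameters wander on a timescale of tens of seconds and rarely line up the same way twice.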

Slowly (I mean, not that slowly) the exploration revealed the definition for a musical tool with a pretty coherent shape, with a lot more to it than I thought I would be able to put together. The biggest surprise discovery though was still to come, and that is something I am calling a neural sequencer.

Neural Sequencer

Algorithmic composition has a long history — from Mozart's dice games through Xenakis's stochastic music, Brian Eno's generative systems, and more recently neural approaches like Music Transformer. A generative process created with a small symbolic music language model baked into a tiny web based, or entirely native, app bundle, with battery friendly, GPU accelerated inference felt like quite an interesting challenge.

I trained a novel, autoregressive transformer model (a "small language model", or perhaps an agentic composition assistant, to be 2026-compatible) for Orbiter, and it is indeed bundled in the app: it generates notes with velocities and durations, either autonomously or in response to notes you play. The model architecture, training method, and data augmentation methods I developed for Orbiter are going to get a post of their own at the point I feel I have explored this in full.

There are three different generative sequencer modes bundled in the app:

- Simple — deterministic patterns where the same URL and system clock always produce the same music, making every session shareable by link. This is what you hear by default.

- Muse — the model generates notes continuously on its own, streaming an evolving composition based on the current scale and tempo.

- Duet — play notes to prompt the model, which responds with complementary musical phrases (a call and response type behaviour).
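The Simple mode's property, that the same seed and clock always yield the same music, can be illustrated with a stateless note function. This is a sketch under assumptions, not the actual sequencer: `note_at`, the step length, and the velocity range are hypothetical, and the scale table is a common 12-tone approximation of Hijaz on D used only as an example. The hash is the well-known SplitMix64 mixing function.

```rust
/// SplitMix64: a small, fast mixing function with good bit diffusion.
fn splitmix64(mut z: u64) -> u64 {
    z = z.wrapping_add(0x9E37_79B9_7F4A_7C15);
    z = (z ^ (z >> 30)).wrapping_mul(0xBF58_476D_1CE4_E5B9);
    z = (z ^ (z >> 27)).wrapping_mul(0x94D0_49BB_1331_11EB);
    z ^ (z >> 31)
}

const ROOT_D4: u8 = 62; // MIDI note number for D4
const HIJAZ_SEMITONES: [u8; 7] = [0, 1, 4, 5, 7, 8, 10]; // illustrative approximation

/// Return (MIDI note, velocity) for the sequencer step containing `clock_ms`.
/// No state is kept: the result depends only on the seed and the step index.
fn note_at(seed: u64, clock_ms: u64, step_ms: u64) -> (u8, u8) {
    let step = clock_ms / step_ms;
    let h = splitmix64(seed ^ splitmix64(step));
    let degree = (h % 7) as usize;
    let octave = ((h >> 8) % 2) as u8;
    let note = ROOT_D4 + HIJAZ_SEMITONES[degree] + 12 * octave;
    let velocity = 40 + ((h >> 16) % 60) as u8;
    (note, velocity)
}
```

Because nothing depends on history, two listeners who open the same link and agree on the clock can each call `note_at` independently and hear the same notes, which is what makes a session shareable by URL.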

Once I had built the Duet mode, suddenly the MIDI in & out capabilities in the app came to full bloom, opening a whole new set of possibilities: I could use this thing to help generate ideas in my choice of DAW (Ableton, sometimes Bitwig), with all the power of its built-in instruments and effects and my plugin arsenal!

Realtime Collaboration

The Orbiter sequencer state, with all the instrument parameters, can be transmitted by sharing a link. Realtime collaboration is also surprisingly simple to build from scratch these days, so I decided to add a little experiment: you can invite others to explore sound with Orbiter together in real time, in the browser with no install, or from the desktop and mobile apps. I am hoping this makes it easy for, say, one person to teach others how the instrument works: changing scales and twisting knobs while everyone sees and hears the effect instantly. Basically, a zero-friction way to explore sound together.

Under the hood, the entire application state — every knob, the scale, root note, sequencer seed — is synchronised between users via a CRDT (conflict-free replicated data type), so concurrent edits merge automatically. Modulators still run locally, so the sound breathes independently for each listener. I intend to open source the collaboration server I developed for this purpose separately.
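The post does not say which CRDT Orbiter uses, so the following is only a sketch of the simplest kind that fits knob-style state: a last-writer-wins map, where every parameter carries a logical clock and a replica id, and merging keeps the entry with the larger (clock, replica) pair. The type names, the `f32` value type and the parameter keys are assumptions made for illustration.

```rust
use std::collections::HashMap;

/// One parameter value tagged with who wrote it and when (logical clock).
#[derive(Clone, Copy, Debug, PartialEq)]
struct Entry {
    value: f32,
    clock: u64,
    replica: u32,
}

/// Last-writer-wins map: merge is commutative, associative and idempotent.
#[derive(Clone, Debug, Default, PartialEq)]
struct LwwMap {
    entries: HashMap<String, Entry>,
}

impl LwwMap {
    /// Local edit, e.g. a user turning a knob.
    fn set(&mut self, key: &str, value: f32, clock: u64, replica: u32) {
        self.apply(key, Entry { value, clock, replica });
    }

    /// Keep whichever entry has the larger (clock, replica); ties cannot occur
    /// as long as replica ids are unique.
    fn apply(&mut self, key: &str, incoming: Entry) {
        let keep_current = self.entries.get(key).map_or(false, |cur| {
            (cur.clock, cur.replica) >= (incoming.clock, incoming.replica)
        });
        if !keep_current {
            self.entries.insert(key.to_string(), incoming);
        }
    }

    /// Fold another replica's state into this one.
    fn merge(&mut self, other: &LwwMap) {
        for (key, entry) in &other.entries {
            self.apply(key, *entry);
        }
    }

    fn get(&self, key: &str) -> Option<f32> {
        self.entries.get(key).map(|e| e.value)
    }
}
```

If two users set the same knob concurrently, both replicas converge on the same winner no matter which order the updates arrive in. Production CRDT libraries add richer types and more compact sync protocols, but the convergence guarantee is the same idea.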

Available Everywhere

Orbiter runs on iOS, macOS, Windows, Linux, and Android, as well as in the browser. It is also available as a plugin: VST3, Audio Unit v3, and CLAP are all supported, so you can bring the generative engine directly into your DAW workflow.

Handpan melody, through the gong resonator.

Gong through all three Orbiter effect plugins — no other plugins or processing.

Technically, this meant solving low-latency audio and GPU-accelerated rendering everywhere: native GPU libraries (Metal, Vulkan, DX12) on native platforms, and WebGPU with a WebGL2 fallback in the browser. ML inference uses WebGPU acceleration with a CPU fallback across all platforms. Rust and its WASM-targeting ecosystem turned out to be crucial: native performance across every platform, with first-class compilation to WebAssembly for the browser.

The lesson I take from building Orbiter this way is that even with a tough systems programming language, creativity really blossoms when you are equipped with a well thought through agent harness. As long as I am pretty damned meticulous about that harness and the upfront degree of automated testing, I am freed from being quite so level-headed about implementation risk and the limits of my capacity to realise something complex. For the first time in my life with a realtime audio related project, I felt the "It will be easy, probably just a quick weekend project" moment. As much as that was a fallacy (always is), it is also a sign of having hit a fun problem when you realise that now is too late to give up on it (I love a good moment of "Schlep blindness").

In any case, this is the first in a series of posts about Orbiter. Next up is Cross-platformish Engineering in Rust: how one codebase runs across browser, desktop, mobile, and DAW plugins, alongside open source infrastructure I intend to release to help others build in a wildly cross-platform way like this.